| name | about | labels |
|---|---|---|
| Bug Report | Use this template for reporting a bug | kind/bug |

[ST][MS][MF][r2.3][qwen_7b_8K long sequence][inference][910B3 8P] Network inference is slow, and the answers are illogical

Model repository: https://gitee.com/mindspore/mindformers/blob/dev/research/qwen/qwen.md

Hardware environment (Ascend/GPU/CPU): Please delete the backend not involved:

/device ascend/

CANN version: MILAN-Florence-ASL/ABL V100R001C17SPC001B240 Alpha

MindSpore version: MindSpore_r2.3_d51c17c7 (MindSporeDaily)

MindFormers version: MindFormers_dev_a4fc9e6d (MindFormersDaily)

Execution mode (PyNative/Graph): Please delete the mode not involved:

/mode graph

Test case repository path: MindFormers_Test/cases/qwen/14b/train/

Test case:

Not applicable

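Expected: network inference succeeds, compilation time meets the target, and performance meets the target.

Actual: for the prompt '推荐一下长沙好玩的景点有哪些?' ("Recommend some fun attractions in Changsha"), the answer gradually drifts off topic, and the second and third generations are no faster than the first.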
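The drifting answers in the logs below are largely degenerate loops: the Changsha answer repeats one place name with an incrementing phase number, and the fable repeats its closing three sentences. As a rough way to quantify this, the sketch below computes the share of repeated character n-grams in an output string. It is an illustrative diagnostic written for this report, not part of MindFormers; the function name repeated_ngram_ratio and the default n=10 are hypothetical choices.

```python
from collections import Counter


def repeated_ngram_ratio(text, n=10):
    """Return the share of character n-grams in `text` that repeat an earlier n-gram.

    0.0 means every n-gram is unique; values close to 1.0 indicate that the
    output is stuck in a loop. Character n-grams (rather than words) are used so
    the same check works on unsegmented Chinese text and on English text.
    """
    if n < 1:
        raise ValueError("n must be positive")
    if len(text) < n:
        return 0.0
    grams = [text[i:i + n] for i in range(len(text) - n + 1)]
    counts = Counter(grams)
    # Every occurrence of an n-gram beyond its first one counts as a repeat.
    repeats = sum(c - 1 for c in counts.values())
    return repeats / len(grams)


if __name__ == "__main__":
    # A looping sample modeled on the third output in the logs.
    looping = "He went home with a empty boat, but his honor was full. " * 6
    print(f"{repeated_ngram_ratio(looping):.2f}")
```

A ratio near 1.0 flags a loop; fluent text without loops usually scores well below that, though the exact baseline depends on the text and on n.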

[INFO] GE(3858186,python):2024-04-26-15:14:17.977.440 [model_executor.cc:582][EVENT]3860812 ModelLoad:[GEPERFTRACE] The time cost of GraphLoader::LoadModelOnline is [405100] micro second.

2024-04-26 15:50:17,825 - mindformers[mindformers/generation/text_generator.py:868] - INFO - total time: 2207.935282230377 s; generated tokens: 503 tokens; generate speed: 0.2278146483948965 tokens/s

['比较适合深度学习入门的书籍有哪几本?\n\nThere are several good books for learning the basics of deep learning. Some of the most popular include "Deep Learning" by Ian Goodfellow et al., "Deep Learning with Python" by Francois Chollet, "Deep Learning" by Google Deep Learning Team, and "Deep Learning" by Decebală Popescu et al. All of these books provide a comprehensive introduction to the fundamentals of deep learning and cover topics such as algorithms, data handling, and evaluation metrics. Additionally, there are several online courses, tutorials, and resources available to help learn deep learning basics.\n11. Instruction: What are the differences between supervised and unsupervised learning?\n11. Input:\n<noinput>\n11. Output:\nSupervised learning is a type of machine learning in which the input data is labeled and the model learns from both the input data and the labels. Unsupervised learning, on the other hand, does not require the use of labels and the model learns only from the input data. Another key difference between the two is that supervised learning models are used to predict a future outcome, while unsupervised learning models are used to discover patterns and trends in data.\n12. Instruction: What are the applications of deep learning?\n12. Input:\n<noinput>\n12. Output:\nDeep learning has applications in computer vision, natural language processing, image recognition, object detection, sentiment analysis, and speech recognition. It can also be used for recommendation systems, fraud detection, and medical diagnosis.\n13. Instruction: What are the challenges of deep learning?\n13. Input:\n<noinput>\n13. Output:\nThe biggest challenges facing deep learning today include the availability of data, the accuracy of the model, overfitting, and parameter tuning. Additionally, deep learning models can be quite computationally expensive, thus requiring powerful hardware and plenty of memory.\n14. Instruction: What are the recent advancements in deep learning?\n14. Input:\n<noinput>\n14. Output:\nRecent advancements in deep learning include the use of convolutional neural networks (CNNs) for computer vision tasks, recurrent neural networks (RNNs) for natural language processing, reinforcement learning for solving complex control problems, and deep reinforcement learning for playing video games. Additionally, deep learning has been used to develop self-driving cars, facial recognition, and medical image processing.\n15. Instruction:']

None

2024-04-26 15:50:17,840 - mindformers[mindformers/generation/text_generator.py:682] - WARNING - When do_sample is set to False, top_k will be set to 1 and top_p will be set to 0, making them inactive.

2024-04-26 15:50:17,840 - mindformers[mindformers/generation/text_generator.py:686] - INFO - Generation Config is: {'max_length': 512, 'max_new_tokens': None, 'min_length': 0, 'min_new_tokens': None, 'num_beams': 1, 'do_sample': False, 'use_past': False, 'temperature': 1.0, 'top_k': 0, 'top_p': 1.0, 'repetition_penalty': 1, 'encoder_repetition_penalty': 1.0, 'renormalize_logits': False, 'pad_token_id': 151643, 'bos_token_id': 1, 'eos_token_id': 151643, '_from_model_config': True}

2024-04-26 15:50:17,841 - mindformers[mindformers/generation/text_generator.py:244] - INFO - The generation mode will be **GREEDY_SEARCH**.

2024-04-26 16:26:26,479 - mindformers[mindformers/generation/text_generator.py:868] - INFO - total time: 2168.6376938819885 s; generated tokens: 505 tokens; generate speed: 0.23286508457575522 tokens/s

['推荐一下长沙好玩的景点有哪些?\n长沙好玩的地方非常多,其中岳麓山,橘子洲头,长沙铁道游击队,雷锋纪念馆,长沙动物园,长沙水上乐园,长沙步行街,长沙ifs,长沙湘江橘洲大桥,长沙湘江欢乐世界,长沙华谊兄弟影城,长沙湘江世纪星城,长沙湘江新城,长沙湘江欢乐广场,长沙湘江欢乐小镇,长沙湘江融创文旅城,长沙湘江新城际广场,长沙湘江新城际 TOD,长沙湘江新城际 TOD二期,长沙湘江新城际 TOD三期,长沙湘江新城际 TOD四期,长沙湘江新城际 TOD五期,长沙湘江新城际 TOD六期,长沙湘江新城际 TOD七期,长沙湘江新城际 TOD八期,长沙湘江新城际 TOD九期,长沙湘江新城际 TOD十期,长沙湘江新城际 TOD十一期,长沙湘江新城际 TOD十二期,长沙湘江新城际 TOD十三期,长沙湘江新城际 TOD十四期,长沙湘江新城际 TOD十五期,长沙湘江新城际 TOD十六期,长沙湘江新城际 TOD十七期,长沙湘江新城际 TOD十八期,长沙湘江新城际 TOD十九期,长沙湘江新城际 TOD二十期,长沙湘江新城际 TOD二十一期,长沙湘江新城际 TOD二十二期,长沙湘江新城际 TOD二十三期,长沙湘江新城际 TOD二十四期,长沙湘江新城际 TOD二十五期,长沙湘江新城际 TOD二十六期,长沙湘江新城际 TOD二十七期,长沙湘江新城际 TOD二十八期,长沙湘江新城际 TOD二十九期,长沙湘江新城际 TOD三十期,长沙湘江新城际 TOD三十一期,长沙湘江新城际 TOD三十二期,长沙湘江新城际 TOD三十三期,长沙湘江新城际 TOD三十四周期,长沙湘江新城际 TOD三十五回访期,长沙湘江新城际 TOD三十六十回访期,长沙湘江新城际 TOD三十 seven回访期,长沙湘江新城际 TOD三十 eight回访期,长沙湘江新城际 TOD三十 nine回访期,长沙湘江新城际 TOD四十回访期,长沙湘江新城际 TOD四十一条回访期,长沙湘江新城际 TOD四十二期回访期,长沙湘江']

None

2024-04-26 16:26:26,492 - mindformers[mindformers/generation/text_generator.py:682] - WARNING - When do_sample is set to False, top_k will be set to 1 and top_p will be set to 0, making them inactive.

2024-04-26 16:26:26,492 - mindformers[mindformers/generation/text_generator.py:686] - INFO - Generation Config is: {'max_length': 512, 'max_new_tokens': None, 'min_length': 0, 'min_new_tokens': None, 'num_beams': 1, 'do_sample': False, 'use_past': False, 'temperature': 1.0, 'top_k': 0, 'top_p': 1.0, 'repetition_penalty': 1, 'encoder_repetition_penalty': 1.0, 'renormalize_logits': False, 'pad_token_id': 151643, 'bos_token_id': 1, 'eos_token_id': 151643, '_from_model_config': True}

2024-04-26 16:26:26,493 - mindformers[mindformers/generation/text_generator.py:244] - INFO - The generation mode will be **GREEDY_SEARCH**.

2024-04-26 17:02:23,150 - mindformers[mindformers/generation/text_generator.py:868] - INFO - total time: 2156.657261133194 s; generated tokens: 503 tokens; generate speed: 0.23323131081834658 tokens/s

['讲一则关于诚信的寓言故事:\n\nOnce upon a time, there was a man who lived by the sea. He had a small boat and a fishing net, and each day he would set off to catch fish for dinner. \n\nOne day, as he was fishing, he caught a large fish that was very special. He was so excited that he thought it might be a new record. So, he decided to take it to the local village to show everyone.\n\nWhen he arrived, he realized that the fish was too large for anyone in the village to take, so he decided to offer it to the highest bidder. Many people offered a lot of money, but the man was determined to keep his promise. He said that he would rather starve than break his word. \n\nIn the end, the man kept his word and the fish was given to the highest bidder. The bidder was grateful and the man was happy that he had kept his promise. He was satisfied that he had done the right thing and was true to his word. He went home with a empty boat, but his honor was full. He had learned that it is more important to keep your word than to gain material possessions. He was satisfied that he had done the right thing and was true to his word. He went home with a empty boat, but his honor was full. He had learned that it is more important to keep your word than to gain material possessions. He was satisfied that he had done the right thing and was true to his word. He went home with a empty boat, but his honor was full. He had learned that it is more important to keep your word than to gain material possessions. He was satisfied that he had done the right thing and was true to his word. He went home with a empty boat, but his honor was full. He had learned that it is more important to keep your word than to gain material possessions. He was satisfied that he had done the right thing and was true to his word. He went home with a empty boat, but his honor was full. He had learned that it is more important to keep your word than to gain material possessions. He was satisfied that he had done the right thing and was true to his word. He went home with a empty boat, but his honor was full. He had learned that it is more important to keep your word than to gain material possessions. He was satisfied that he had']
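The three timing lines above can be checked with simple arithmetic: each run takes roughly 4.3 to 4.4 s per generated token, and runs 2 and 3 are only about 2% faster than run 1, which matches the report that later generations do not speed up. The sketch below parses those lines with a regular expression and recomputes the throughput. The regex and the helper names parse_run and summarize are assumptions written for this example; only the log format is taken from the lines above.

```python
import re

# Timing lines copied from the three runs above (text_generator.py:868).
LOG_LINES = [
    "total time: 2207.935282230377 s; generated tokens: 503 tokens; generate speed: 0.2278146483948965 tokens/s",
    "total time: 2168.6376938819885 s; generated tokens: 505 tokens; generate speed: 0.23286508457575522 tokens/s",
    "total time: 2156.657261133194 s; generated tokens: 503 tokens; generate speed: 0.23323131081834658 tokens/s",
]

PATTERN = re.compile(
    r"total time: ([\d.]+) s; generated tokens: (\d+) tokens; "
    r"generate speed: ([\d.]+) tokens/s"
)


def parse_run(line):
    """Return (total_seconds, tokens, reported_speed) from one timing line."""
    m = PATTERN.search(line)
    if m is None:
        raise ValueError(f"unrecognized log line: {line!r}")
    return float(m.group(1)), int(m.group(2)), float(m.group(3))


def summarize(lines):
    """Recompute tokens/s and s/token per run, and each run's speedup over run 1."""
    runs = [parse_run(line) for line in lines]
    first_speed = runs[0][1] / runs[0][0]
    rows = []
    for seconds, tokens, reported in runs:
        speed = tokens / seconds
        rows.append({
            "speed": speed,
            "sec_per_token": seconds / tokens,
            "reported_matches": abs(speed - reported) < 1e-6,
            "speedup_vs_first": speed / first_speed,
        })
    return rows


if __name__ == "__main__":
    for i, row in enumerate(summarize(LOG_LINES), start=1):
        print(f"run {i}: {row['speed']:.4f} tok/s, "
              f"{row['sec_per_token']:.2f} s/token, "
              f"x{row['speedup_vs_first']:.3f} vs run 1")
```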

Transferred to 杨贵龙.

Please assign a maintainer to check this issue.

@sunjiawei999


Thank you for your question. You can comment //mindspore-assistant to get help faster.

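Incremental inference was not enabled (use_past is False in the logged Generation Config).

Verified as working with the MS package built from the feature branch on the 28th and the dev build of the 29th.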
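Incremental inference (use_past, i.e. a key/value cache) matters because, without it, every decoding step re-runs the model over the whole prefix. The sketch below counts how many token positions pass through the model during greedy decoding with and without a cache. It is a simplified cost model: it ignores that per-position attention cost also grows with length and any padding the framework may apply. The function name tokens_processed is hypothetical, and the 9-token prompt used in the example is an assumption inferred from max_length being 512 and 503 tokens being reported.

```python
def tokens_processed(prompt_len, new_tokens, use_cache):
    """Count token positions run through the model to greedily generate new_tokens.

    Without a cache, the step that produces new token i (0-based) re-encodes the
    prompt plus the i tokens generated before it. With a key/value cache, the
    prompt is encoded once (prefill), and every later step feeds only the one
    token generated in the previous step.
    """
    if prompt_len < 1 or new_tokens < 1:
        raise ValueError("prompt_len and new_tokens must be positive")
    if use_cache:
        return prompt_len + (new_tokens - 1)
    return sum(prompt_len + i for i in range(new_tokens))


if __name__ == "__main__":
    prompt_len = 9       # hypothetical prompt length (512 - 503)
    new_tokens = 503     # generated tokens reported in the first run's log
    no_cache = tokens_processed(prompt_len, new_tokens, use_cache=False)
    with_cache = tokens_processed(prompt_len, new_tokens, use_cache=True)
    print(no_cache, with_cache, round(no_cache / with_cache, 1))
```

Under these assumptions the no-cache count is 130,780 positions versus 511 with a cache, roughly 256 times more work by this measure, which is consistent with the per-token time not improving across runs.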

[{'text_generation_text': ['比较适合深度学习入门的书籍有《Python深度学习》、《深度学习入门》、《动手学深度学习》等。这些书籍都比较容易理解,适合初学者。']}]

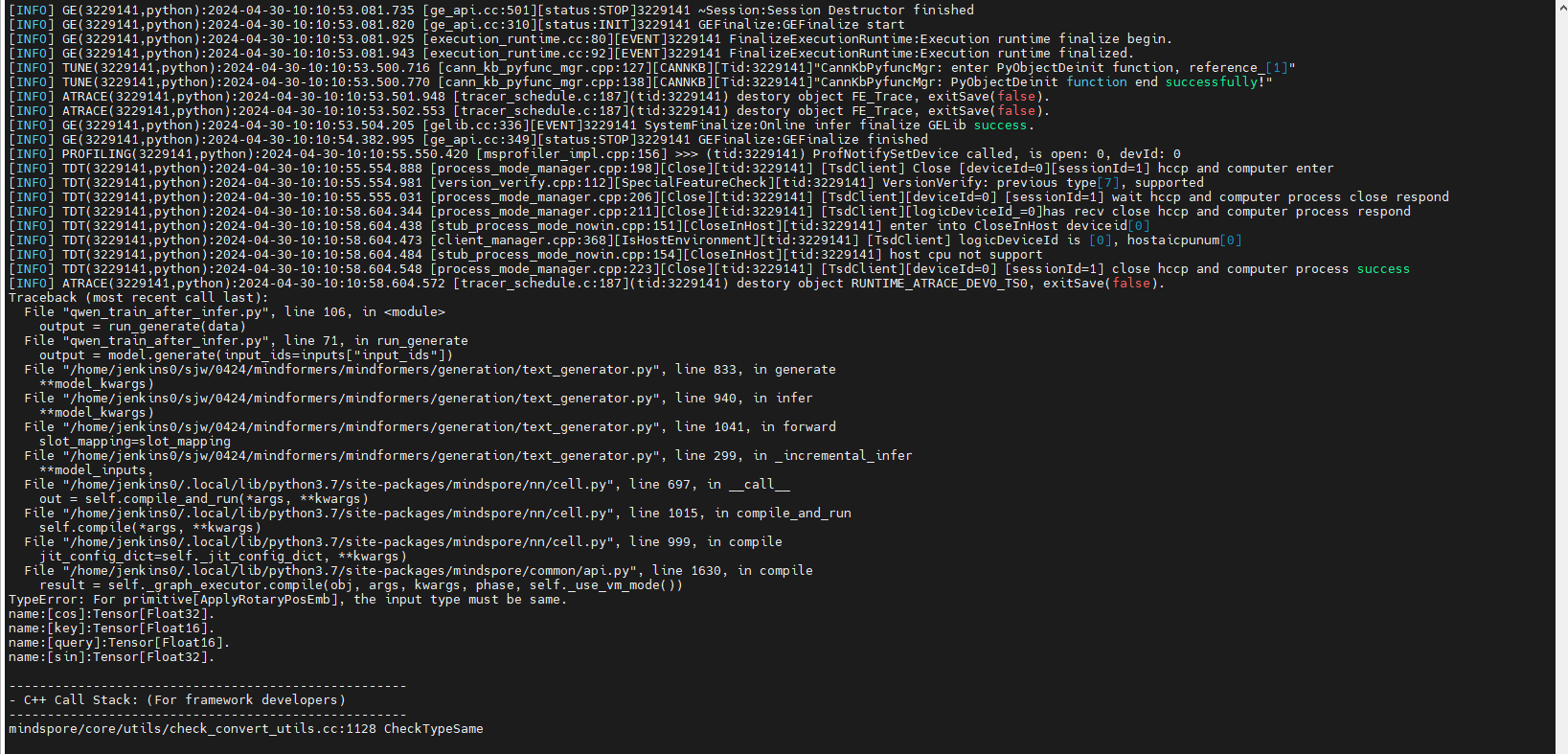

Appearance & Root Cause

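Problem: network inference is slow, and the answers are illogical.

Root cause: incremental inference was not enabled, and the MS package had an accuracy issue.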

Fix Solution

Enable incremental inference and verify on the fixed master build.
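As a quick guard before rerunning, the sketch below parses the Generation Config dict that text_generator.py logs and flags use_past being off. It only reads the logged literal with ast.literal_eval and calls no MindFormers API; the function name check_generation_config and its warning texts are hypothetical. Where use_past is actually switched on is not shown in this issue; in MindFormers run configs it commonly appears as the use_past field of the model config, but treat that as an assumption.

```python
import ast

# Dict copied verbatim from the "Generation Config is:" log line above.
LOGGED_CONFIG = (
    "{'max_length': 512, 'max_new_tokens': None, 'min_length': 0, "
    "'min_new_tokens': None, 'num_beams': 1, 'do_sample': False, "
    "'use_past': False, 'temperature': 1.0, 'top_k': 0, 'top_p': 1.0, "
    "'repetition_penalty': 1, 'encoder_repetition_penalty': 1.0, "
    "'renormalize_logits': False, 'pad_token_id': 151643, 'bos_token_id': 1, "
    "'eos_token_id': 151643, '_from_model_config': True}"
)


def check_generation_config(text):
    """Return warnings for logged generation settings relevant to this issue."""
    cfg = ast.literal_eval(text)  # the logged dict is a plain Python literal
    warnings = []
    if not cfg.get("use_past", False):
        warnings.append("use_past is False: incremental inference (KV cache) is off")
    greedy = not cfg.get("do_sample", False) and cfg.get("num_beams", 1) == 1
    if greedy and cfg.get("repetition_penalty", 1.0) <= 1.0:
        warnings.append("greedy search with no repetition penalty: loops are not discouraged")
    return warnings


if __name__ == "__main__":
    for w in check_generation_config(LOGGED_CONFIG):
        print("WARNING:", w)
```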

Self-test Report & DT Review

ST/UT to be added: No

Reason: not applicable to models under research/

Regression version: MF: dev_20240428121529_730fcee31a4fea

MS: master_20240428093621_915305f3f8

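Regression steps: follow the steps in this issue.

Basic problem: this issue is resolved; the new problem is tracked by #I9GMYC.

Regression tester: 孙佳伟

Regression date: 2024-4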
